Nvidia is making a bold move that could shake the AI hardware industry. The company plans to switch a significant portion of its AI server chips to LPDDR memory, the same low-power memory used in smartphones. At first, this seems counterintuitive: AI servers require enormous memory capacity, far beyond what smartphones need. The key reason behind the shift is power efficiency. AI servers run around the clock and consume huge amounts of electricity, and LPDDR memory uses far less power than traditional DDR5 server memory.

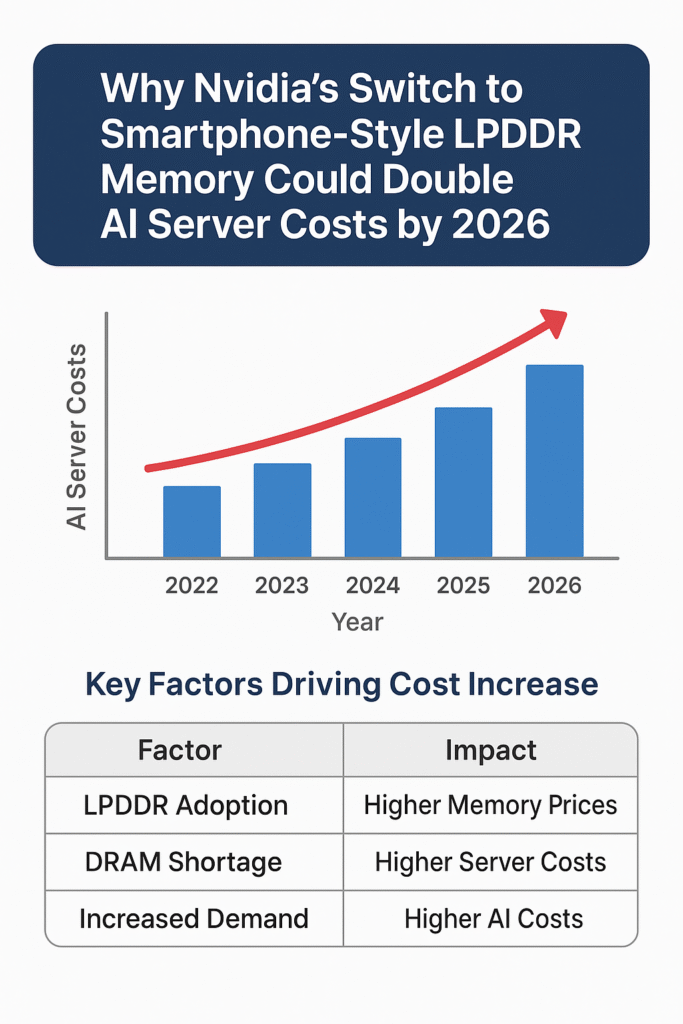

While this move helps reduce energy costs and cooling requirements, it comes with a significant risk. Demand for LPDDR in AI servers could far outstrip supply, because the memory was never produced at scale for data center use. Analysts predict that limited production capacity combined with skyrocketing demand could push server-memory prices to double by late 2026, raising AI server costs along with them. The shift would affect cloud providers, startups, and even end users who rely on AI applications.

According to a report by Reuters, Nvidia’s shift to smartphone‑style LPDDR memory chips for its AI servers could cause server‑memory prices to double by late 2026, posing significant cost pressures for cloud providers and AI developers.

The Memory Crunch Explained

| Factor | Current Situation | Impact of LPDDR Shift |
|---|---|---|
| Memory Type | DDR5 for servers | LPDDR (smartphone-grade) |
| Power Usage | High | Low (energy-efficient) |
| Supply | Adequate for servers | Limited; built for phones |
| Price Trend | Steady | Likely to double by 2026 |
| Cooling Requirement | High | Lower |
| Data Center Impact | Standard | Could increase total cost of AI servers |

This table illustrates why the LPDDR shift is not just a minor engineering tweak but a structural change affecting the entire AI ecosystem.

Why LPDDR Matters for AI Servers

LPDDR’s advantages are clear: it uses less electricity and produces less heat. This reduces the burden on cooling infrastructure in data centers. However, AI workloads demand massive memory capacity, which LPDDR was not originally designed to handle. The limited supply may create a bottleneck, causing memory costs to surge. Cloud providers like AWS, Google Cloud, and Microsoft Azure will likely be the first to feel the impact, followed by startups and smaller AI developers.

Memory suppliers like Samsung, SK Hynix, and Micron are already prioritizing high-bandwidth memory (HBM) for advanced AI GPUs. Adding LPDDR to the mix could stretch their manufacturing capabilities even further. The resulting scarcity may lead to higher prices not just for LPDDR but also for traditional DDR5 memory used in consumer PCs and laptops.

Economic Implications

If memory prices double, the total cost of AI servers could increase dramatically. Every AI server consists of GPUs, CPUs, memory, cooling, and power infrastructure. Memory is a large part of the overall cost. Rising memory prices will affect AI model training, inference, and even cloud service fees. Startups may struggle with increased operating costs, while major AI firms will need to adjust budgets and pricing strategies.
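To make the arithmetic concrete, here is a minimal sketch of how a change in memory prices feeds into total server cost. The function name, the base cost of 100,000, and the memory shares of 5%, 10%, and 20% are all hypothetical values chosen for illustration; none of them come from the report. The sketch also assumes every other component keeps its price.

```python
def server_cost_after_memory_increase(base_cost, memory_share, memory_price_multiplier):
    """Return the new total server cost when only the memory line item changes price.

    base_cost: total server cost before the change (any currency unit)
    memory_share: fraction of base_cost spent on memory, between 0 and 1
    memory_price_multiplier: 2.0 means memory prices double
    """
    if not 0.0 <= memory_share <= 1.0:
        raise ValueError("memory_share must be between 0 and 1")
    memory_cost = base_cost * memory_share
    other_cost = base_cost - memory_cost  # GPUs, CPUs, cooling, power: assumed unchanged
    return other_cost + memory_cost * memory_price_multiplier


# Hypothetical inputs for illustration only; not figures from the report.
BASE_COST = 100_000
for share in (0.05, 0.10, 0.20):
    new_cost = server_cost_after_memory_increase(BASE_COST, share, 2.0)
    increase_pct = (new_cost / BASE_COST - 1) * 100
    print(f"memory share {share:.0%}: total cost rises by {increase_pct:.1f}%")
```

Under this simplified model, doubling memory prices raises the total by the same percentage as memory's share of the bill, so the size of the impact depends on how much of a given server's cost is memory.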

The Domino Effect

AI has already caused waves in the tech industry, first with GPU shortages and then with rising electricity demands. Now memory shortages could be the next domino. This creates a scenario where increased costs may trickle down to end-users, affecting access to AI tools and applications. Even consumer electronics could see price increases as manufacturers adjust to the memory crunch.

A Silver Lining

While rising memory costs pose challenges, they could also drive innovation. Higher prices may incentivize new memory technologies, more efficient AI servers, and greener data center designs. In the long term, these changes could lead to smarter, more sustainable AI infrastructure.
